An autonomous quadruped robot inspired by Boston Dynamics' Spot, designed and built from the ground up. It features real-time LIDAR-based navigation, sensor fusion, and AI-powered obstacle avoidance.

Project Highlights

✓ Real-time LIDAR mapping

✓ Autonomous navigation

✓ Sensor fusion algorithms

✓ Custom control software

✓ Obstacle detection & avoidance

✓ Commercially viable design

- Platform: NVIDIA Jetson Nano

- Stack: Python, TensorFlow, PyBullet, ROS, Adafruit

- Sensors: LIDAR, IMU, Camera

- Simulation: Robot Dog Simulation Repository

- Software: Robot Dog Software Repository

📌 Note on Source Code

The main repository is private as this project represents significant development effort and has commercial potential. If you are interested in learning more about the technical details or potential collaboration, please contact me via email.

Technical Architecture

The robot is powered by an NVIDIA Jetson Nano, which provides enough compute for real-time sensor processing and AI inference. A 2D LIDAR sensor scans the surroundings, and custom SLAM algorithms turn those scans into a map of the environment while localizing the robot within it, which is what enables autonomous navigation.
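The custom SLAM code is private, so the following is only a minimal sketch of the mapping step, assuming the robot pose is already known: it projects one 2D LIDAR scan into an occupancy grid by marking the cell where each beam hits. The function `scan_to_grid` and its grid size, resolution, and maximum range are hypothetical; the scan parameters are named after the fields of the ROS `sensor_msgs/LaserScan` message. A full mapper would also clear the free cells along each beam and accumulate log-odds, and real SLAM additionally estimates the pose.

```python
import math


def scan_to_grid(ranges, angle_min, angle_increment, pose,
                 size=40, resolution=0.1, max_range=8.0):
    """Mark LIDAR hit cells as occupied (1) in a size x size grid.

    Hypothetical helper. pose = (x, y, theta) of the robot in metres/radians
    in the world frame; the world origin maps to cell (size // 2, size // 2).
    Rows follow world y and columns follow world x.
    """
    grid = [[0] * size for _ in range(size)]
    x0, y0, theta = pose
    origin = size // 2
    for i, r in enumerate(ranges):
        # Skip invalid or out-of-range returns (no obstacle detected on this beam)
        if not math.isfinite(r) or r <= 0.0 or r >= max_range:
            continue
        beam = theta + angle_min + i * angle_increment
        # Beam endpoint (the obstacle hit) in world coordinates
        hx = x0 + r * math.cos(beam)
        hy = y0 + r * math.sin(beam)
        col = origin + int(math.floor(hx / resolution))
        row = origin + int(math.floor(hy / resolution))
        if 0 <= row < size and 0 <= col < size:
            grid[row][col] = 1
    return grid
```

For example, `scan_to_grid([1.05, float("inf"), 0.55], 0.0, math.pi / 4, (0.0, 0.0, 0.0))` marks one hit 1.05 m straight ahead and one 0.55 m to the left, and ignores the infinite (no-return) beam.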

Key Technical Achievements

- Sensor Fusion: Integrated data from multiple sensors (LIDAR, IMU, encoders) to create a robust state estimation system

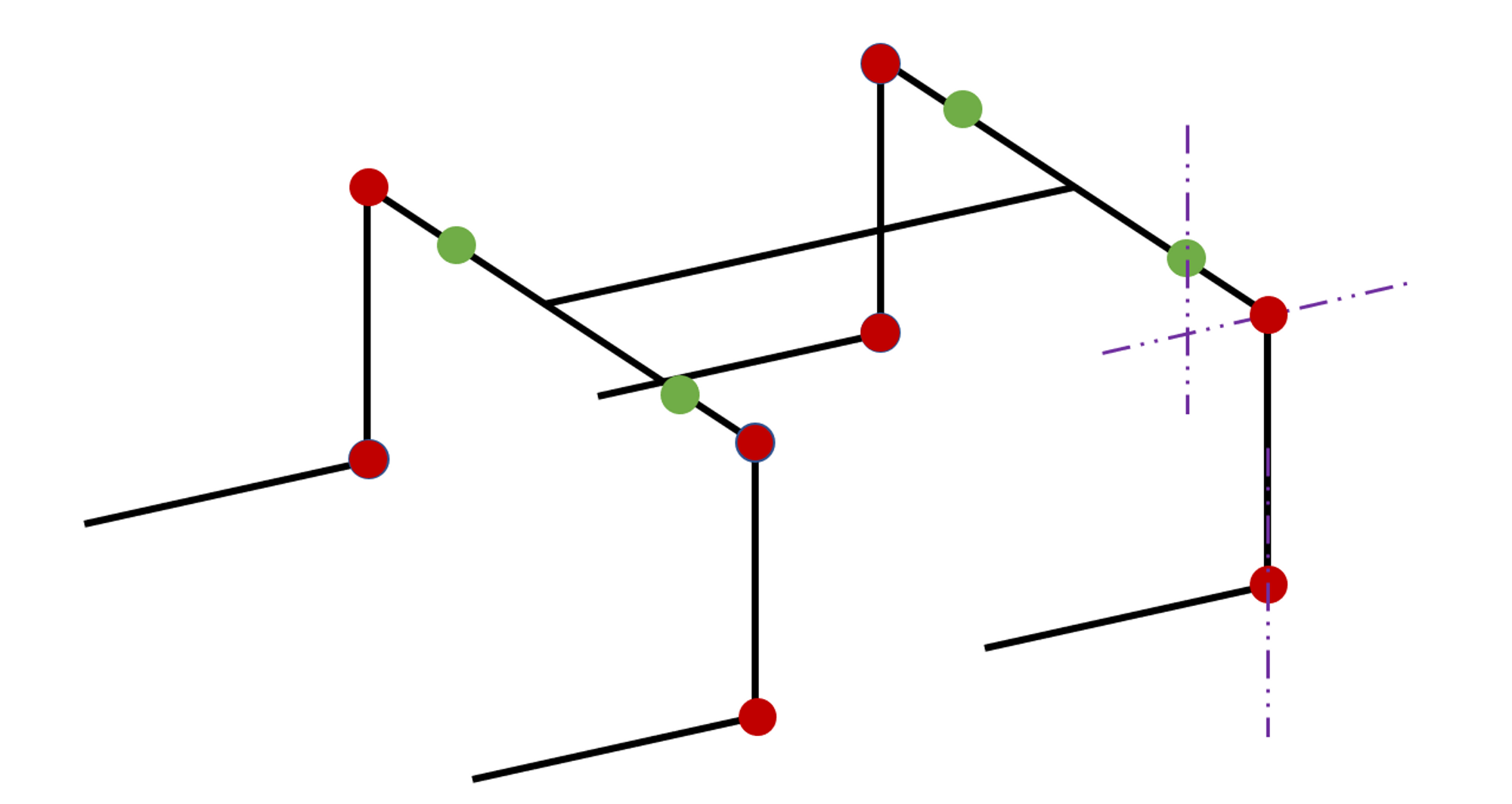

- Gait Control: Implemented smooth walking gaits using inverse kinematics and trajectory planning

- Obstacle Avoidance: Real-time obstacle detection and path replanning using LIDAR point cloud data

- Simulation Environment: Created a PyBullet simulation for testing algorithms before deployment on hardware
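The sensor fusion item does not say which estimator the robot uses, so as an illustration only, here is a complementary filter, one common lightweight way to fuse IMU data, estimating body pitch. It is not necessarily the author's method. The function name, the blend factor `alpha`, and the axis convention (x forward, z up, accelerometer reading +g on z when level) are assumptions; IMUs differ in their axis conventions.

```python
import math


def complementary_pitch(pitch_prev, gyro_rate, accel, dt, alpha=0.98):
    """Fuse a gyro rate (rad/s) and accelerometer vector (m/s^2) into pitch (rad).

    Hypothetical helper. accel = (ax, ay, az) in the assumed IMU frame.
    """
    ax, ay, az = accel
    # Gyro integration: smooth over short intervals, but drifts over time
    pitch_gyro = pitch_prev + gyro_rate * dt
    # Gravity direction from the accelerometer: noisy, but drift-free at rest
    pitch_accel = math.atan2(-ax, math.sqrt(ay * ay + az * az))
    # Trust the gyro at high frequency and the accelerometer at low frequency
    return alpha * pitch_gyro + (1.0 - alpha) * pitch_accel
```

Starting from a wrong estimate of 0.5 rad on a level, stationary robot, repeated calls with `gyro_rate=0.0` and `accel=(0.0, 0.0, 9.81)` pull the estimate toward zero by a factor of `alpha` per step. That fixed blend is the design trade-off: a larger `alpha` rejects more accelerometer noise but corrects gyro drift more slowly. A fuller state estimator over LIDAR, IMU, and encoders would more typically be an (extended) Kalman filter.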
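For the gait control item, the core of inverse kinematics for one leg can be sketched as planar two-link IK (hip and knee) solved with the law of cosines. This is a simplification: the robot's actual leg geometry, link lengths, and any hip abduction joint are not described, so `leg_ik`, `leg_fk`, and the 0.10 m link lengths are hypothetical placeholders.

```python
import math


def leg_ik(x, y, l1=0.10, l2=0.10, knee_sign=1):
    """Planar two-link inverse kinematics for one leg (hypothetical helper).

    (x, y) is the foot target in metres relative to the hip joint, in the leg's
    sagittal plane (x forward, y up, so a foot below the hip has y < 0).
    l1 and l2 are placeholder thigh and shin lengths.
    Returns (hip, knee) joint angles in radians; knee_sign selects the knee bend.
    """
    d = math.hypot(x, y)
    if d > l1 + l2 or d < abs(l1 - l2):
        raise ValueError(f"foot target ({x}, {y}) is out of reach")
    # Law of cosines gives the knee angle; clamp against floating-point rounding
    cos_knee = (d * d - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    knee = knee_sign * math.acos(max(-1.0, min(1.0, cos_knee)))
    # Hip angle: direction to the foot minus the angular offset caused by the shin
    hip = math.atan2(y, x) - math.atan2(l2 * math.sin(knee), l1 + l2 * math.cos(knee))
    return hip, knee


def leg_fk(hip, knee, l1=0.10, l2=0.10):
    """Forward kinematics: foot position for given joint angles (to check IK)."""
    x = l1 * math.cos(hip) + l2 * math.cos(hip + knee)
    y = l1 * math.sin(hip) + l2 * math.sin(hip + knee)
    return x, y
```

In a gait loop, trajectory planning would generate foot targets along swing and stance paths, and each control tick would call `leg_ik` per leg and send the resulting angles to the servos. Running `leg_fk` on the output should reproduce the requested foot position, which is a cheap sanity check before commanding hardware.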
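For the obstacle avoidance item, the replanner itself is not described. A common way to replan on a LIDAR-derived occupancy grid is to rerun a grid search whenever newly detected obstacles block the current path. The sketch below swaps in plain 4-connected A* for that purpose; `astar` and the grid encoding (0 = free, 1 = occupied) are assumptions, not the project's code.

```python
import heapq


def astar(grid, start, goal):
    """4-connected A* on an occupancy grid (0 = free, 1 = occupied).

    Hypothetical helper. Returns the path as a list of (row, col) cells from
    start to goal, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk parent links back to the start to rebuild the path
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None
```

On an empty 5×5 grid the path from `(0, 0)` to `(0, 4)` is a straight line of 5 cells. After marking cells `(0, 2)` through `(3, 2)` as occupied, as if the LIDAR had just detected a wall, calling `astar` again returns a 13-cell detour through `(4, 2)`. Full replanning from scratch is simple and fast on small grids; incremental planners such as D* Lite reuse earlier search effort when the map changes often.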